The first China fashion brand makeup culture, the niche brand bibididi, vowed to be a popular fashion brand.

When buying a new energy vehicle, what matters most? Range? Smart features? Equipment? All of these are important, but safety and quality matter even more.

As new energy vehicle technology has developed, both range and energy efficiency have improved greatly. As a result, range anxiety is no longer the biggest worry for electric vehicle users; "safety anxiety" is.

According to the latest data released by the emergency management department, the spontaneous combustion rate of new energy vehicles rose by 32% in the first quarter of last year alone, with an average of 8 new energy vehicles catching fire (including spontaneous combustion) every day. Since new energy vehicles became widespread, reports of spontaneous combustion and other safety accidents have kept appearing, which is why consumers pay closer attention to the safety of these vehicles.

So how should carmakers respond to users’ safety anxiety about new energy vehicles? As a "national team player", Hongqi has taken an approach that is "simple and crude" yet highly credible: it verifies the safety performance of its new energy vehicles in extreme environments through a series of extreme challenges.

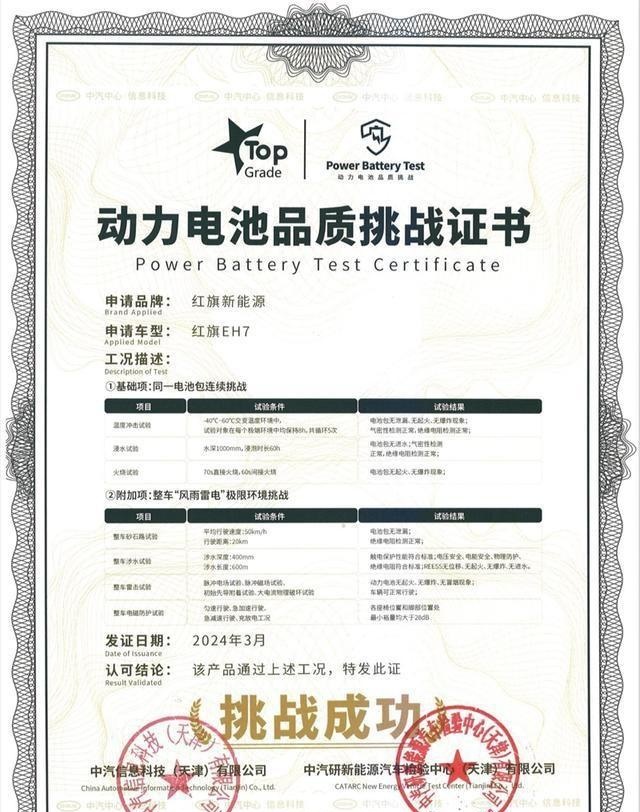

Unafraid of "wind, rain, thunder and lightning" or "fire", the Hongqi EH7 sets a safety benchmark

To allay users’ anxiety about the safety and quality of new energy vehicles, the Hongqi EH7, the brand’s first pure electric car built on the FMEs super architecture, has passed the "wind, rain, thunder and lightning" fearless extreme challenge.

To verify the safety of the Hongqi EH7’s self-developed battery pack in extreme weather, Hongqi designed a sequence of tests that subjected the same battery pack to temperature shock, water immersion, and fire, one after another.

The first is the temperature shock test, which checks the power battery’s ability to withstand rapid changes in ambient temperature. In a sealed chamber, the temperature must swing from -40 °C to 60 °C within 30 minutes and then be held for nearly 8 hours. The whole process is repeated five times.

In this harsh environment, which does not occur naturally on Earth, almost any equipment would be damaged, yet the Hongqi EH7 battery pack "survived" unharmed: it did not leak, rupture, catch fire, or explode.

After experiencing high temperatures and severe cold, the battery pack of the Hongqi EH7 was immersed in deep water for another 60 hours.

The current waterproof standard for power batteries is mainly IP67 (the battery can sit in 1 meter of water for 30 minutes without being affected). In the Hongqi EH7 water immersion test, the battery pack was placed directly in a 1-meter-deep pool and soaked for 60 hours, 120 times the national standard duration. After the test, no water stains were found inside the case, indicating that the pack remained sealed and well insulated. With this result, the Hongqi EH7 battery pack demonstrated that it reaches IPX8, the highest waterproof rating in current immersion testing.
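As a quick check of the "120 times" figure, the short Python sketch below divides the 60-hour soak by the 30-minute IP67 reference quoted above. The function name `soak_multiple` and the constant names are illustrative only and do not come from any test standard.

```python
from datetime import timedelta

# Durations quoted in the article.
IP67_SOAK = timedelta(minutes=30)  # IP67 reference: 30 minutes at 1 m depth
EH7_SOAK = timedelta(hours=60)     # reported soak time of the EH7 battery pack


def soak_multiple(test: timedelta, reference: timedelta) -> float:
    """Return how many times longer the test soak is than the reference soak."""
    return test / reference  # dividing two timedeltas yields a float


print(soak_multiple(EH7_SOAK, IP67_SOAK))  # 120.0
```

The ratio only compares durations; it says nothing about depth or pressure, which the article reports as the same 1 meter in both cases.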

Even after the three extreme tests of temperature change, water immersion, and fire, the Hongqi EH7 showed no safety problems. But that was not the end: the Hongqi EH7 then went through four further tests covering sand and gravel, wading, lightning strike, and electromagnetic radiation.

On a sand and gravel road, the Hongqi EH7 drove 20 km at an average speed of 50 km/h; the battery pack was undamaged, the insulation resistance test was normal, and the vehicle continued to run normally. In the wading test, which simulated rainstorm conditions, the Hongqi EH7 traveled 600 m back and forth through a wading pool 400 mm deep (4 times the national standard) and passed without problems.

In the electromagnetic test, the electromagnetic radiation level of the Hongqi EH7 was measured at constant speed, under acceleration, and in other operating conditions across the 10 Hz to 400 kHz band. The margin exceeded 28 dB (the larger the margin, the lower the vehicle’s radiation), which is a leading level in the industry.

Most striking of all, the Hongqi EH7 also underwent a lightning strike test. After a three-stage test that applied a pulsed electric field, generated a discharge, and then sustained a continuous discharge, the vehicle showed no abnormalities and could still be driven normally. The Hongqi EH7 is also the first pure electric vehicle in China to publicly take on a lightning strike test and pass it.

Overall, the power battery of the Hongqi EH7 can withstand freezing, overheating, water ingress, spontaneous combustion, and impacts. The safety performance of the whole vehicle in extreme scenarios is also excellent, which directly addresses users’ concerns about battery safety.

How did the Hongqi EH7 acquire its "indestructible body"?

Lu Xun once said: "A true warrior dares to face a bleak life!" And a truly safe battery should face a cruel trial.

As the tests show, the Hongqi EH7 was examined very rigorously. The first three tests (temperature change, water immersion, and fire) were all completed on the same battery pack, which is a severe trial for any battery.

Lithium batteries are extremely "sensitive" to temperature. Overheating may lead to combustion or even explosion, while overcooling may cause lithium dendrites to grow inside the cell, pierce the separator, and cause short circuits and other hazards. The Hongqi EH7 battery pack can complete these extreme challenges mainly because it adopts a six-sided, three-dimensional insulation protection scheme developed by Hongqi. The scheme surrounds the battery with a protective insulated space on all six sides and delivers no thermal propagation across multiple scenarios, top-level IPX8 waterproofing, fire protection, and other functions, so that the battery can be used safely and normally in a range of extreme environments.

An excellent battery alone is not enough, however. The Hongqi EH7’s passive safety is also very good. It uses a 9H4M cage structure to strengthen the body; high-strength steel accounts for 74% of the body, of which ultra-high-strength hot-formed boron steel accounts for 22%. The car readily meets the five-star rating standards of both C-NCAP and E-NCAP, and the structure keeps the battery from being crushed in a collision or rollover, preserving its integrity and adding a further layer of safety.

More importantly, these extremely rigorous tests were not designed for "show" or as a gimmick to flaunt strength. They squarely address the pain points of new energy vehicle users and resolve their many concerns about vehicle safety through sustained investment and deep work in technological innovation. This is an example that all new energy vehicle companies should learn from and follow.

"Zero Pressure, Enjoy Peace of Mind": Opening the "Zero Pressure" Era of Green Travel

The "zero pressure" travel offered by the Hongqi EH7 is reflected not only in the safety and quality of the vehicle but also in "zero pressure" value when buying and using the car.

Amid the current industry-wide price war, the Hongqi EH7 has not blindly followed suit. Instead, it has widened its focus from the single step of purchase to user value across the product’s entire life cycle, launching the "Zero Pressure · Peace of Mind" plan. The plan has three parts: the "Zero Pressure Four Lifetime" service, the "Zero Pressure Worry-Free GO" benefits, and the "Zero Pressure Limited Time Jump" product upgrade. The "Zero Pressure Four Lifetime" service adds "Lifetime Free Maintenance" to the Hongqi brand’s three existing after-sales benefits ("Lifetime Free Warranty", "Lifetime Free Rescue", and "Lifetime Free Car Pickup and Delivery"), further easing the worries of new energy vehicle users.

In cash terms, the Hongqi EH7’s "Zero Pressure · Peace of Mind" plan gives users benefits worth up to 100,000 yuan. This distinctive way of "pampering fans" not only gives users tangible benefits but also improves the ownership experience over the product’s whole life cycle. More importantly, it sets an example for the whole industry of returning to sound values.

In the face of the industry’s frenzied price cutting, the Hongqi EH7 does not blindly compete on price; it competes on value. The first is safety value: through pioneering, cutting-edge safety technology, the Hongqi EH7 improves the safety of the battery and the whole vehicle in multiple dimensions and gives users "zero pressure" safety and quality. The second is product value: a maximum range of 820 km, 0-100 km/h acceleration in 3.5 seconds, 5 millimeter-wave radars, an all-aluminum chassis, a maximum motor speed of 22,500 r/min, and more. In performance and intelligence, the Hongqi EH7 reaches flagship level in its class. More importantly, alongside this strong product, the Hongqi EH7 gives users benefits worth 100,000 yuan.

Competing in this way gives users a strong "sense of gain" without harming the company’s interests, allowing the company to develop healthily. It can fairly be called a brilliant move.

(This article was originally produced by Wenwu Lane New Media Studio. Please credit the source when reprinting: Wenwu Lane. Author: Qingfeng. The views expressed are personal and for reference only.)

Xinhua News Agency, Beijing, June 7. The 29th session of the Standing Committee of the 13th National People’s Congress (NPC) held its first plenary meeting on the afternoon of the 7th and heard a report by Shen Chunyao, vice chairman of the NPC Constitution and Law Committee, on the results of deliberation of the draft Anti-Foreign Sanctions Law of the People’s Republic of China.

The reporter learned from the spokesperson’s office of the Legislative Affairs Commission of the NPC Standing Committee that, for some time, certain Western countries have used pretexts such as Xinjiang and Hong Kong to spread rumors about and slander China. In violation of international law and the basic norms of international relations, they have imposed so-called "sanctions" on Chinese state organs, organizations, and state personnel under their own domestic laws, grossly interfering in China’s internal affairs. The Chinese government has severely condemned this hegemonic behavior, and people from all walks of life have expressed strong indignation. To resolutely safeguard national sovereignty, dignity, and core interests, and to oppose Western hegemonism and power politics, the Chinese government has since the beginning of this year repeatedly announced countermeasures against entities and individuals of the countries concerned, in the spirit of "deal with a man as he deals with you". Around this year’s "two sessions", some NPC deputies, members of the Chinese People’s Political Consultative Conference, and people from all walks of life put forward opinions and suggestions, arguing that the state needs a dedicated law against foreign sanctions to give China strong legal support and guarantees for countering foreign discriminatory measures. The work report of the NPC Standing Committee approved by the Fourth Session of the 13th National People’s Congress states clearly, under "Main Tasks for the Next Year", that the legal "toolbox" for meeting challenges and guarding against risks should be enriched, with a focus on countering sanctions, interference, and long-arm jurisdiction.

In line with the relevant work arrangements, the Legislative Affairs Commission of the NPC Standing Committee carefully studied the legislative proposals from various parties, summarized China’s practice in taking countermeasures and related work, reviewed relevant foreign legislation, solicited the opinions of the central and state departments concerned, and drafted the Anti-Foreign Sanctions Law of the People’s Republic of China (Draft). In April this year, the Chairman’s Council of the NPC Standing Committee submitted a legislative bill in accordance with statutory procedures, and the 28th session of the 13th NPC Standing Committee conducted the first deliberation of the draft. Members of the Standing Committee generally favored enacting the law and also offered suggestions for improving it. The NPC Constitution and Law Committee revised and improved the draft in light of the Standing Committee’s deliberation opinions and the views of all sides, and submitted its deliberation report and the second-deliberation version of the draft to the 29th session of the 13th NPC Standing Committee in accordance with the law.

WeChat and Alipay have been shadowing each other lately. At the beginning of May, both announced partnerships with the Carrefour supermarket chain, so shoppers can pay with WeChat or Alipay Wallet at Carrefour stores. Before that, the two companies announced almost simultaneously that they would offer mobile city service platforms in 15 cities across the country, trying to "go hand in hand" in public services. With similar timing and similar services, the two companies are once again chasing each other in life services, as they did with taxi-hailing apps, and users hoping for red envelopes can hardly help getting excited. What are the two companies up to this time?

The IT Times reporter tried out the payment and city services of Alipay and WeChat on the spot to see whether the two are "good brothers" or locked in a "life-and-death" fight.

Mobile Payment: "Good Brothers" Against UnionPay

Coverage area

Alipay: FamilyMart, Haode, Costco, Nonggongshang, Carrefour, and many other supermarkets and convenience stores have begun to accept Alipay Wallet scan-code payment.

WeChat Pay: Some retail stores under Haode and Nonggongshang support it; the other supermarkets all said it will take some time.

Conclusion: Alipay wins.

Entrance position

Alipay: the payment code sits on the top-level screen, so it can be scanned as soon as the app is opened.

WeChat Pay: the entrance is buried deeper; you have to go into the second-level WeChat > Wallet menu to find the payment code. From opening the app and entering the wallet to typing the password and completing the payment, five steps are needed.

Conclusion: Alipay wins.

User usage frequency

Observation location: Dragon Dream Carrefour Supermarket in Zhongshan Park

The Alipay payment logo is posted prominently at the checkout, but customers who actively choose Alipay are still a minority. The reporter watched for 5 minutes and found that most customers still paid in cash or by swiping a bank card. However, supermarket staff said that on the three weekend days when Alipay Wallet offers discounts, 7 out of 10 customers pay with it.

Conclusion: "Interest with discounts, none without" is the practical dilemma retailers face when they bring Alipay and WeChat Pay into their stores.

Offline teams: Alipay is in no position to shut out WeChat Pay

"WeChat only gradually opened its hardware interface for offline scan-code payment in the second half of last year, and many companies are still bringing their technology up to standard, so its rollout is slower than Alipay’s." Hangzhou Yiling Technology is one of Alipay’s authorized national agents. After promoting Alipay Wallet for two years, it has now started an offline promotion business for WeChat Pay as well. In its view, as long as Alipay does not impose "exclusivity requirements", the more mobile payment options, the better.

Agents have little interest in promoting payment acquiring on its own.

As an Internet company, Alipay handles a few large enterprises such as Carrefour directly when developing offline business; most small businesses use the Alipay cashier, whose promotion and operation are delegated to authorized offline service providers.

The IT Times reporter learned that Alipay has 8 directly authorized first-level agents nationwide, and these agents work with hundreds of second-level dealers across the country. Through this large network, Alipay’s coverage of offline payment outlets has gradually matured.

"Our main business is providing O2O solutions for offline shops. Offline payment acquiring is only one part of it; it is mainly a service, and it is basically hard to make a profit from it." Mr. Sun, who previously promoted Alipay’s offline payment business in Zibo, Shandong Province, has now quit. "As a second-level agent, in the first year the 6‰ fee on merchants’ turnover all went into Alipay’s account and dealers got none of it. Only from the second year is there a 3:7 or 4:6 split, but our clients are basically small and medium-sized merchants, so it is hard to make a profit."

At present, most Alipay ground promotion teams make their profits by packaging online solutions. A price list for an Alipay service window obtained by the reporter shows that the initial setup fee for a membership and marketing data analysis platform ranges from 5,600 yuan to 16,800 yuan, with 20% of that amount then charged as a service fee every year. Fees like these are the key to profitability for these offline service companies.
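To make the quoted price list concrete, here is a minimal Python sketch of the arithmetic. It assumes the 20% annual service fee is calculated on the initial setup fee and is charged once per year; the three-year horizon, the function names, and the constant names are hypothetical choices for illustration, not details from the price list.

```python
# Figures quoted in the article (yuan).
SETUP_FEE_LOW = 5_600
SETUP_FEE_HIGH = 16_800
SERVICE_FEE_PERCENT = 20  # assumed to apply to the initial setup fee


def annual_service_fee(setup_fee: int) -> int:
    """Yearly service fee in yuan, using integer math to avoid rounding noise."""
    return setup_fee * SERVICE_FEE_PERCENT // 100


def total_cost(setup_fee: int, years: int) -> int:
    """Setup fee plus `years` annual service fees (hypothetical horizon)."""
    return setup_fee + annual_service_fee(setup_fee) * years


for fee in (SETUP_FEE_LOW, SETUP_FEE_HIGH):
    print(fee, annual_service_fee(fee), total_cost(fee, years=3))
# 5600 1120 8960
# 16800 3360 26880
```

Under these assumptions, a merchant on the cheapest package would pay 1,120 yuan a year on top of the setup fee, and one on the most expensive package 3,360 yuan a year.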

The low rate of WeChat is more attractive to agents.

Since the second half of last year, WeChat Pay has gradually opened its hardware interface for offline payment. So far only two or three service companies in China are able to handle offline scan-code payment for WeChat Pay, and they all already have mature experience in promoting Alipay offline. "One condition for getting access to WeChat Pay is the technical ability to build out the interface; the other is having offline outlets," said a person from Qiding Yunshang, one of the first companies authorized to use the WeChat Pay interface. Only companies that have worked with Alipay have the strength to meet such conditions, the person added.

Although WeChat Pay started its offline promotion late, it offers strong support on the acquiring rate for core merchants, which is attractive enough for agents. "WeChat Pay’s rate in the first year is 3‰, and from the second year the fee is split 3:7, with the second-level agent taking seven parts and the first-level agent three. That is clearly more generous than the 4.8‰ support Alipay currently gives its first-level agents." As a result, some companies have chosen to pull out of Alipay promotion and move into offline promotion of WeChat Pay.

For traditional brick-and-mortar merchants, both Alipay and WeChat Pay offer real-time settlement and lower rates than traditional card acquiring. "The traditional acquiring rate is 1% to 1.2%, and Alipay and WeChat are far below that. The real savings will encourage merchants to gradually change how they take payment." In the industry’s view, regardless of whether Alipay is leading or WeChat is catching up, neither is yet in a position to compete with the other at this stage. "What they need to do now is join hands to compete with UnionPay for the market."
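The rates quoted in this section can be compared with a short Python sketch. The turnover of 100,000 yuan is a hypothetical figure chosen for illustration, and treating the second-year 3:7 split as a split of the fee collected is an assumption based on the agents’ descriptions; the function names are likewise illustrative.

```python
# Rates quoted in the article, in per mille (parts per thousand).
RATES_PERMILLE = {
    "Alipay (first-year rate cited by a former agent)": 6,
    "WeChat Pay (first-year rate)": 3,
    "Traditional acquiring, low end (1%)": 10,
    "Traditional acquiring, high end (1.2%)": 12,
}

TURNOVER_YUAN = 100_000  # hypothetical merchant turnover


def fee(turnover_yuan: float, rate_permille: float) -> float:
    """Fee in yuan charged on a given turnover at a per-mille rate."""
    return turnover_yuan * rate_permille / 1000


def split_3_7(fee_yuan: float) -> tuple[float, float]:
    """Assumed second-year split: (first-level agent share, second-level agent share)."""
    return fee_yuan * 3 / 10, fee_yuan * 7 / 10


for name, rate in RATES_PERMILLE.items():
    print(f"{name}: {fee(TURNOVER_YUAN, rate):.0f} yuan")

first_level, second_level = split_3_7(fee(TURNOVER_YUAN, 3))
print(f"WeChat Pay 3:7 split: {first_level:.0f} / {second_level:.0f} yuan")
```

On this hypothetical turnover, the fees come to 600 yuan at 6‰ and 300 yuan at 3‰, against 1,000 to 1,200 yuan for traditional acquiring, which is the gap the interviewees describe.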

City services: the real competition is still far off

Shanghai is a must-have pilot city for both Alipay and WeChat. At present each offers between 10 and 12 services there. Alipay’s distinctive offerings include "scenic spot booking" and "marriage registration reservation", while WeChat’s city services include "housing tax payment" and "tax invoice information inquiry". The rest, including traffic inquiries and payment of water, electricity, and gas bills, are the same on both.

WeChat’s service page is a list divided into two categories, government services and life services, each with several sub-categories; the overall page style is rather plain. Alipay’s city service page is tiled with icons, with the functions laid out in a grid and a fresh overall color scheme and style. Alipay shows weather conditions and traffic restrictions directly at the top, while WeChat "hides" this information in a sub-column under the life services category and puts a picture of the city at the top.

Some functions of WeChat’s "City Service" are noticeably less smooth. Under "medical service", for example, WeChat connects only to a hospital registration website, while Alipay also connects to several local hospitals. When the reporter entered the registration platform’s "quick appointment" page through WeChat, the hospitals shown in the list could not be opened, whereas in Alipay they could be opened for further steps.

In addition, both are connected to Shanghai’s library inquiry system. The reporter searched for the book "Social Animals" on WeChat and got a blank result, but the same search through Alipay returned three results. After searching for several more books, the reporter found that common titles such as "One Thousand and One Nights" and "Economics" could be found on WeChat, but slightly more specialized books might not be.

For living expenses, Alipay covers more kinds of bills than WeChat, such as fixed-line broadband, cable TV, traffic fines, and property fees. It also lets users scan a bill instead of entering the bill number by hand.

In overall use at present, Alipay’s "city service" is faster and smoother than WeChat’s. However, Yin Ting, who is in charge of the WeChat project in Shanghai, told the IT Times reporter that "the technical team is gradually improving some functions of WeChat city service, such as applying PayPal’s voice recognition technology for bill numbers to living payments in 'city service'".

Offline competition: whoever builds the platform first wins in the end.

The government is open-minded and the two companies are fighting for speed.

"Connecting to the two platforms has indeed diverted a good share of traffic, especially for registration and appointments. In the past, a lot of time was wasted queuing and collecting forms. Now some information can be filled in directly on a mobile phone, and appointments also let us arrange resources better and work more efficiently. For entry-exit applications, the queuing time is now within 10 minutes," Wang Jiwei, who is in charge of Weibo and WeChat for Shanghai Public Security, told the IT Times reporter.

At present, Shanghai Public Security has connected some service functions of entry-exit management and traffic information to WeChat, and indirectly connected to Alipay’s platform through the "city service" platform paid by Sina Weibo. In a month or so, they have seen the positive effects.

Openness is the shared attitude of most government departments that currently open electronic interfaces. In their view, if an interface can be opened to WeChat within the permitted scope, there is no reason not to open it to Alipay, Weibo, or Baidu as well: doing so is convenient for the public and improves government efficiency, killing two birds with one stone. So, provided security is ensured, they do not rule out open cooperation with a number of similar Internet platforms. "The public security department has its own security mechanism and will open the corresponding interfaces only when security is ensured," Wang Jiwei said.

The government’s attitude is open, so the remaining question for Alipay and WeChat is speed. Yin Ting told the IT Times reporter, "Many projects are being advanced in coordination with various commissions and offices, but because the government needs time to allocate manpower and funds, they cannot be pushed too quickly. Some problems are being solved and the services are gradually improving." Ant Financial said that, besides actively cooperating with government departments, it has also set up a dedicated team to negotiate cooperation with major hospitals and other institutions. (This article, by Zhang Weiwei and Zhang Cheng, is published on Titanium Media with the authorization of IT Times.)

An EMU train "comes home" for maintenance after 48 hours in service. (Reporter Wang Lili)

CCTV News (reporter Wang Lili): After 6:30 pm every day, EMU trains that have been running for 48 hours return to the Taiyuan EMU depot for routine maintenance and overhaul.

The EMU depot has many departments, including an electrical workshop, a communication workshop, a dispatching and monitoring center, a locomotive room, a passenger service room, and a cleaning room. This is when work begins for Wang Xiaoxia, Party branch secretary of the Taiyuan EMU on-board equipment workshop of the Taiyuan Electric Section, China Railway Taiyuan Bureau Group Co., Ltd., who is responsible for the core equipment, the "train overspeed protection device" (hereinafter referred to as ATP).

On February 1st, China’s annual Spring Festival travel rush began again. In this, the world’s largest human migration, 2.98 billion passenger trips are expected, of which railways are expected to carry 390 million.

As one of China’s first generation of EMU maintenance workers, who grew up alongside the EMU, Wang Xiaoxia has worked in EMU maintenance for eight years and has personally carried out more than 4,000 EMU overhauls.

"Taiyuan Railway Company has jurisdiction over five high-speed railway lines and 48 EMU trainsets. For high-speed EMUs with a top speed of more than 300 kilometers per hour, meticulous and rigorous maintenance is the key to safe operation," Wang Xiaoxia said.

From railway signal worker to maintaining high-end EMUs

Wang Xiaoxia was born into a railway worker’s family. Having lived beside the railway since childhood, she has a natural affection for railway work. In 2007, after finishing graduate school, she was assigned to the Taiyuan Railway Administration and became a railway signal worker.

In 2009, the Shitai Passenger Dedicated Line opened and the first batch of EMUs introduced by the Taiyuan Railway Administration went into service. As one of the few women with a graduate degree overhauling high-speed rail equipment anywhere in the railway system at the time, Wang Xiaoxia moved from an ordinary signal worker’s post to overhauling the ATP of high-end EMUs.

If the wheels of an EMU are compared to its limbs and the engine to its heart, then the on-board equipment is its brain. ATP in particular, the core component of the on-board equipment, is the EMU’s central nervous system and can be called its black box.

In the interview, Kang Chunhui, deputy secretary of the Party Committee of the Taiyuan Electric Section, said that ATP receives and processes a large amount of digital information, and that keeping the equipment running well and reliably is like giving the EMU "clairvoyant eyes and far-hearing ears". If the ATP has a problem, the EMU cannot set off. Wang Xiaoxia’s workshop is responsible for the daily maintenance of the ATP equipment on 48 EMUs operating on lines such as the Daxi High-speed Railway, the Shitai Passenger Dedicated Line, and the Beijing-Guangzhou High-speed Railway.

"Brain Doctor" in the EMU

Wang Xiaoxia tests a high-speed train. (Reporter Wang Lili)

At 18:40 on February 1st, the Taiyuan EMU operation depot, a modern maintenance facility that can hold 12 high-speed trains at once, was brightly lit.

Running by day and being overhauled by night is the routine for high-speed trains. Overhaul runs from 6 pm to 7 am the next day, peaking between 11 pm and 3 am.

It is understood that maintaining one EMU requires unified command by the dispatcher and can be completed within 2 hours when all posts work in coordination.

"Nothing can go wrong at any step; it is as tense as going into battle." Wang Xiaoxia’s work area is responsible for ATP system maintenance on 24 EMUs. On the train, a set of cabinets called the "system host" is the focus of their inspection. "It is the core equipment that ensures the safe operation of the EMU. In an abnormal situation it automatically alerts the driver. If the driver fails to follow the prompts, it immediately takes control of the train automatically to bring it to a safe and steady stop."

After the on-board inspection, Wang Xiaoxia has to climb down into the inspection pit to check the sensing devices on the underside of the train. Anything abnormal is dealt with urgently; if it cannot be fixed, the train has to be held out of service the next day.

Wang Xiaoxia’s work area has 40 employees, all young men and women, who rest by day and work by night. Everyone jokes that they are "brain doctors" living on "American time".

Work that now seems easy to master was far from simple at the beginning. The EMU was something new that she first encountered in 2009; the equipment and test instruments used on the trains were all imported, and several foreign languages had to be understood just to work with them. As a graduate degree holder, Wang Xiaoxia felt duty-bound to take on the job of overcoming the technical difficulties.

Through her exchanges with training instructors, manufacturers, EMU drivers, and mechanics, Wang Xiaoxia built up rich practical experience. She said that "ensuring that the safety of high-speed rail and its passengers is foolproof" is not an abstract idea but the concrete analysis, judgment, answering, and guidance that every piece of data calls for.

"The EMUs assigned to the Taiyuan Bureau carry four types of ATP, and the types differ greatly. Daily maintenance requires as many as 20 kinds of analysis and monitoring software." Wang Xiaoxia said their work is like chasing the frontier of science and technology, and they dare not be careless or slack.

Innovation Studio specializes in treating the "intractable diseases" of EMUs.

Wang Xiaoxia runs a simulation on the simulation test system she developed. (Reporter Wang Lili)

Some time ago, a problem appeared in the ATP system settings of an EMU that was about to leave the depot. Based on the driver’s description, Wang Xiaoxia decisively told the driver to modify the ATP system parameters manually. In less than a minute the settings returned to normal, the danger was eliminated, and the EMU left the depot on schedule.

Shanxi’s dry climate easily produces static electricity, which makes ATP components and other precision equipment prone to faults or damage.

"At that time, nowhere in the railway system was there equipment for regularly testing these spare parts. When an ATP failure required a replacement part, we did not know whether the replacement itself was in working order." Kang Chunhui said that, to solve this problem, Wang Xiaoxia independently developed an indoor simulation test system for EMU on-board equipment, filling the gap left by the absence of any indoor simulation test system for train control on-board equipment.

Wang Xiaoxia’s two inventions not only solved the problem of periodic power-on test for spare parts of EMU, but also increased the training opportunities for employees to solve "intractable diseases" in practical operation. What is more impressive is that the two systems can generate direct economic benefits of about 10 million yuan per year.

With the arrival of the Spring Festival travel rush, Wang Xiaoxia’s work became even busier. During the day she focused on management and professional skills, and at night she worked alongside the shift crews, solving problems whenever and wherever they arose. In the interview, she said: "For a high-speed train, even a single screw is a big deal, and ATP in particular bears directly on the safety of the EMU. What we have to do is ensure the safety of the train and let every passenger get home safely."

[Abstract] As a key technology leading a new round of scientific and technological revolution and industrial transformation, generative artificial intelligence, represented by ChatGPT, has continually spawned new scenarios, new business formats, new models and new markets, changed the way information and knowledge are produced, and reshaped the interaction between humans and technology, with a major impact on education, finance, media and games. Against this background, countries around the world have introduced artificial intelligence development strategies and specific policies to seize the strategic commanding heights. At the same time, the security risks exposed by generative artificial intelligence, such as data leakage, the generation of false content and improper use, have attracted wide attention from all countries. The development, application and governance of generative artificial intelligence is no longer a challenge for any single country but a common challenge facing the whole international community. To deal effectively with the new challenges that generative artificial intelligence poses to information content governance, we should balance security and development, technological innovation and technological governance, and the compliance obligations of enterprises and their capacity to bear them.

Keywords: generative artificial intelligence; governance model; legislation; internal and external challenges; multidimensional balance [Chinese Library Classification number] D92 [Document identification code] A

Generative artificial intelligence (Generative AI) is a new technology in the development of artificial intelligence that uses statistical methods to generate new content, such as video, audio, text and even software code, according to probability. Using the Transformer (a neural network model based on the self-attention mechanism), generative artificial intelligence can deeply analyze existing data sets, identify the positional relationships and correlations within them, and then be continuously optimized and trained through reinforcement learning from human feedback to form a large language model, which finally makes decisions or predictions for the generation of new content. ① In addition to text generation and content creation, generative artificial intelligence has a wide range of application scenarios, such as customer service, investment management, artistic creation, academic research, code programming and virtual assistance. In short, self-generation, self-learning and rapid iteration are the basic characteristics that distinguish generative artificial intelligence from traditional artificial intelligence.

The Interim Measures for the Management of Generative Artificial Intelligence Services (hereinafter referred to as the Interim Measures), officially issued by seven departments including the Cyberspace Administration of China, came into effect on August 15, 2023, with the aim of promoting the healthy development and standardized application of generative artificial intelligence and safeguarding national security and social public interests. As a key technology leading a new round of scientific and technological revolution and industrial transformation, generative artificial intelligence, represented by ChatGPT, has continually spawned new scenarios, new business formats, new models and new markets, changed the way information and knowledge are produced, and reshaped the interaction between humans and technology, with a major impact on education, finance, media and games. Against this background, countries around the world have introduced artificial intelligence development strategies and specific policies to seize the strategic commanding heights. At the same time, the security risks exposed by generative artificial intelligence, such as data leakage, the generation of false content and improper use, have attracted wide attention from all countries. It can be said with certainty that the development, application and governance of generative artificial intelligence is no longer a challenge for any single country but a common challenge facing the whole international community.

Comment on the main supervision modes of artificial intelligence abroad

Artificial intelligence is a "double-edged sword": it promotes social progress but also brings risks and challenges. The international community is committed to steadily promoting the development of artificial intelligence. For example, UNESCO adopted the first global agreement on the ethics of artificial intelligence, the Recommendation on the Ethics of Artificial Intelligence, which defined ten principles, including proportionality and do no harm, safety and security, and fairness and non-discrimination. Since 2018, the EU has continued to promote the design, development and deployment of artificial intelligence while striving to regulate the use and management of artificial intelligence and robots. The European Union’s Artificial Intelligence Act, which entered into force in 2024, brought this work to a climax and became a milestone in the history of artificial intelligence governance. The United States pays more attention to the development of artificial intelligence and regulates it mainly through the Blueprint for an AI Bill of Rights (hereinafter referred to as the Blueprint of the Bill of Rights). Because the governance measures of the European Union and the United States are relatively mature and representative, the advantages and disadvantages of their different regulatory models are discussed below, in the hope of providing a reference for the healthy development and effective governance of artificial intelligence in China.

EU artificial intelligence legislation: giving priority to safety while taking fairness into account. In terms of legislative history, in April 2021 the European Commission issued the legislative proposal "Proposal for a Regulation of the European Parliament and of the Council Laying Down Harmonised Rules on Artificial Intelligence (Artificial Intelligence Act) and Amending Certain Union Legislative Acts" (hereinafter referred to as the "Artificial Intelligence Act"), which opened the "hard law" road of artificial intelligence governance. In December 2022, the final version of the compromise draft of the Artificial Intelligence Act was formed. In June 2023, the European Parliament adopted its negotiating mandate on the draft Artificial Intelligence Act and revised the original proposal. On December 8, 2023, the European Parliament, the Council of the European Union and the European Commission reached an agreement on the Artificial Intelligence Act, which provides for comprehensive regulation of the field of artificial intelligence. On the whole, the Artificial Intelligence Act establishes an ethical and legal framework for the development and use of artificial intelligence in the European Union, supplemented by the AI Liability Directive to ensure its implementation. The several rounds of discussion on the Artificial Intelligence Act focused mainly on the following:

The first is the definition of artificial intelligence and the scope of application of the Act. Article 3 of the proposed Artificial Intelligence Act defined artificial intelligence as software developed with one or more techniques and approaches that can, for a given set of human-defined objectives, generate outputs (such as content, predictions, recommendations or decisions) influencing the environments it interacts with. This definition is very broad and may cover a large amount of software that is not traditionally regarded as artificial intelligence, which is not conducive to the development and governance of artificial intelligence. Therefore, the current version limits the definition of artificial intelligence to "a system based on machine learning or logic and knowledge" that is designed to operate with varying levels of autonomy and can, for explicit or implicit objectives, generate outputs such as predictions, recommendations or decisions that influence physical or virtual environments. At the same time, Annex I and the European Commission’s authorization to modify the definition of artificial intelligence were deleted. Although the Artificial Intelligence Act did not originally address generative artificial intelligence, the appearance of ChatGPT led legislators to add definitions of general-purpose artificial intelligence and foundation models in the amendments and to require generative artificial intelligence to comply with additional transparency requirements, such as disclosing the source of content and designing models to prevent the generation of illegal content. The Artificial Intelligence Act has extraterritorial effect and applies to all providers and deployers of artificial intelligence systems (whether established in the EU or in a third country), all distributors and importers, authorized representatives of providers, manufacturers of certain products established or located in the EU, and EU data subjects whose health, safety or fundamental rights are significantly affected by the use of artificial intelligence systems.

The second is the regulatory model for artificial intelligence. The "Artificial Intelligence Act" adopts a risk-based approach, classifying systems and setting different obligations according to their potential risks to the health, safety and fundamental rights of natural persons. The first level is unacceptable risk: such systems may not be deployed by any enterprise or individual. The second is high risk: the relevant parties may place such systems on the market or use them only after fulfilling obligations such as prior assessment, and continuous monitoring is required during and after use. The third is limited risk: such systems require no special license or certification and carry no reporting or record-keeping obligations, but they should follow the principle of transparency and allow appropriate traceability and explainability. The fourth is low or minimal risk: the relevant parties may deploy and use such systems at their own discretion. As far as generative artificial intelligence is concerned, because it has no specific purpose and can be applied in different scenarios, it cannot be classified by its general mode or manner of operation; classification should instead be based on the intended purpose and the specific field of application for which it is developed or used. ②

The third is the general principles of artificial intelligence. Specifically, they include the following. Human agency and oversight: artificial intelligence systems must be developed and used as tools that serve human beings, respect human dignity and individual autonomy, and function in a way that can be properly controlled and supervised by humans. Technical robustness and safety: the development and deployment of artificial intelligence should minimize accidents and unexpected harm, ensure robustness when unexpected problems occur, and be resilient against attempts by malicious third parties to alter the performance of artificial intelligence systems or use them illegally. Privacy and data protection: artificial intelligence systems must be developed and used in accordance with existing privacy and data protection rules, and the data they process must meet high standards of quality and integrity. Transparency: the development and use of artificial intelligence systems must allow appropriate traceability and explainability, make people aware that they are communicating or interacting with an artificial intelligence system, and properly inform users of the system’s capabilities and limitations and affected persons of their rights. Non-discrimination and fairness: the development and use of artificial intelligence systems must include diverse participants, promote equal access, gender equality and cultural diversity, and avoid discriminatory effects and unfair bias prohibited by EU or national law. Social and environmental well-being: artificial intelligence systems should be developed and used in a sustainable and environmentally friendly way that benefits everyone, while their long-term impact on individuals, society and democracy is monitored and evaluated.

The EU intends to establish a global standard for artificial intelligence regulation through the Artificial Intelligence Act, so that Europe can gain a dominant position in international competition in artificial intelligence. The "Artificial Intelligence Act" lays down relatively reasonable rules for dealing with artificial intelligence systems, which can to a certain extent avoid discrimination, surveillance and other potential harms, especially in areas related to fundamental rights. For example, the Artificial Intelligence Act lists certain prohibited uses of artificial intelligence, and facial recognition in public places is one of them. In addition, it integrates risk mitigation controls into the business units where risks may arise, which can help organizations understand the cost-effectiveness of artificial intelligence systems, carry out compliance self-review to clarify responsibilities and obligations, and ultimately adopt artificial intelligence with confidence. At the same time, however, the Artificial Intelligence Act has defects in risk classification, supervision, rights protection and its liability mechanism. For example, it adopts horizontal legislation that tries to bring all artificial intelligence within the scope of regulation without considering in depth the different characteristics of different kinds of artificial intelligence, which may make the relevant risk prevention measures impossible to implement. ③

American artificial intelligence legislation: emphasizing self-regulation and supporting technological innovation. Against the global background of law and policy making on artificial intelligence, the United States has gradually formed a regulatory framework based on the voluntary principle. The most comprehensive regulatory measure in the United States is the Blueprint of the Bill of Rights issued by the White House Office of Science and Technology Policy (OSTP) in October 2022, which aims to support the protection of citizens’ rights in the design, deployment and governance of automated systems. Under the guidance of the Blueprint of the Bill of Rights, federal departments began to formulate specific policies within their respective remits. For example, the US Department of Labor formulated the Fair Manual of Artificial Intelligence, which aims to prevent artificial intelligence from discriminating against job seekers and employees on the basis of race, age, gender and other characteristics. The core of the Blueprint of the Bill of Rights is five principles. Safe and effective systems: the public should be protected from unsafe or ineffective systems. Algorithmic discrimination protections: the public should not face discrimination by algorithms and systems, and automated systems should be designed and used in an equitable way. Data privacy: automated systems should have built-in protections to ensure that the public’s data is not abused, and the public should have agency over how their data is used. Notice and explanation: the public has the right to know that an automated system is being used and to understand what it is and how it produces outcomes that affect them. Human alternatives, consideration and fallback: where appropriate, the public should be able to opt out of automated systems in favor of a human alternative or other alternatives. ④ Because these principles have not been enacted into law or regulation, they are not binding. The Blueprint of the Bill of Rights is therefore not an enforceable "Bill of Rights" backed by legislation, but rather a forward-looking blueprint for governance based on assumptions about the future.

At present, the US Congress has taken a relatively hands-off approach to the regulation of artificial intelligence, although the Democratic leadership has indicated its intention to introduce a federal law to regulate it. Chuck Schumer, the majority leader of the US Senate, proposed a new framework to guide future legislation and regulation of artificial intelligence, covering "who", "where", "how" and "protection", and requiring technology companies to review and test artificial intelligence systems before releasing them and to provide users with the results. However, given the way the two parties in the United States check each other, the likelihood of such a law passing Congress is low, and even if it has a chance of passing, it will need several rounds of revision. In contrast, faced with the strategic competitive pressure brought by the European Union’s "Artificial Intelligence Act" and the security risks that generative artificial intelligence represented by ChatGPT poses in many fields, federal agencies in the United States have taken an active, interventionist stance and regulate within their own jurisdictions. For example, the US Federal Trade Commission (FTC) actively polices deceptive and unfair practices related to artificial intelligence by enforcing the Fair Credit Reporting Act and the Federal Trade Commission Act, and the first investigation that OpenAI faced was conducted by the FTC. In January 2023, the National Institute of Standards and Technology (NIST) issued the Artificial Intelligence Risk Management Framework, which classified the risks of artificial intelligence in detail. In April 2023, the US Department of Commerce publicly solicited opinions on accountability measures for artificial intelligence, including whether artificial intelligence models should go through certification procedures before release.

At the local level in the United States, California passed the California Consumer Privacy Act of 2018 (CCPA) as a positive response to the EU General Data Protection Regulation. In 2023, at the initiative of Assemblymember Bauer-Kahan, California proposed bill AB331 (Automated decision tools), which requires deployers and developers of automated decision tools to conduct an impact assessment of any automated decision tool they use on or before January 1, 2025, and every year thereafter, including a statement of the tool’s purpose and its expected benefits, uses and deployment environment. New York City has promulgated the Automated Employment Decision Tools Act (AEDT Law), which requires employers and employment platforms that use automated employment decision tools in recruitment and promotion decisions to conduct a fresh and thorough review of their artificial intelligence systems and more comprehensive risk testing to assess whether they fall within the scope of the AEDT Law. In addition, legislatures in Texas, Vermont and Washington state have introduced legislation requiring state institutions to review the artificial intelligence systems they develop and use and to disclose their use of artificial intelligence effectively. It can be seen that state and local governments in the United States take a positive attitude towards artificial intelligence governance. The main challenge they face is how to regulate artificial intelligence innovation in Silicon Valley while creating minimal obstacles. In California, for example, the proposed bill contains two provisions to overcome this challenge. First, the focus of artificial intelligence governance is placed on citizens’ rights, opportunities and risks in accessing key services rather than on the details of specific technologies, which leaves room for the innovative development of artificial intelligence. Second, it establishes transparency for artificial intelligence by requiring developers and users to submit an annual impact assessment to the California Department of Civil Rights, explaining in detail the types of automated tools involved, for public access. Developers also need to develop a governance framework detailing how the technology is used and its possible impact. In addition, the California bill addresses a private right of action, which provides a remedy by allowing individuals to file lawsuits when their rights are violated. ⑤

At present, the focus of artificial intelligence regulation in the United States is still on applying existing laws to artificial intelligence rather than formulating specialized artificial intelligence laws. For example, the FTC has repeatedly stated that Section 5 of the Federal Trade Commission Act, which prohibits "unfair or deceptive acts or practices", can be fully applied to artificial intelligence and machine learning systems. In fact, how to reconcile existing laws with artificial intelligence is not only an urgent task for individual countries but also a pressing problem facing the international community. The US Congress has not yet reached a consensus on federal legislation to regulate artificial intelligence, including the regulatory framework, risk classification and other specifics. Chuck Schumer, the majority leader of the US Senate, advocates comprehensive regulation of artificial intelligence similar to that of the European Union and is trying to speed up the legislative process in Congress through frameworks and special forums, which conflicts with the voluntary principle of the United States. Therefore, it will take a long time for federal legislation on artificial intelligence regulation to appear.

The New Challenge of Generative Artificial Intelligence to Information Content Governance

Generally speaking, the risks faced by artificial intelligence come mainly from three sources: technology, the market and norms. ⑦ The generative artificial intelligence represented by ChatGPT is approaching the goal of general artificial intelligence, but it also brings new challenges to information content governance that urgently call for forward-looking and targeted theoretical research. Academic analysis of the risks of generative artificial intelligence has taken three main perspectives. The first starts from the general risks of artificial intelligence and, taking into account the unique characteristics of generative artificial intelligence, studies the risks in the areas of AI ethics, intellectual property protection, and privacy and personal data protection. ⑧ The second studies the risks and governance of generative artificial intelligence in specific fields, such as the risk of sentencing deviation when generative artificial intelligence is used in judicial decisions. The third follows the operating structure of generative artificial intelligence, studying the risks at each stage from preparation through operation to generation and then proposing corresponding measures, such as using technical and managerial means to correct the risk of algorithmic discrimination at the operation stage. ⑨ This paper holds that the risks can also be analyzed from internal and external perspectives. Internal challenges are those that exist in the model itself, including input quality problems, processing problems and output quality problems. External challenges come from outside the model, including the risk of improper use and the risk to legal supervision.

Input quality problems: Artificial intelligence consists of three elements, algorithms, computing power and data, of which data is the foundation and determines, to some extent, the accuracy and reliability of its output. Generative artificial intelligence is a kind of artificial intelligence, so its output (generated content) is also affected by the quantity and quality of data. Generative artificial intelligence must be trained with high-quality data. Once the data set is polluted or tampered with, the trained generative artificial intelligence may damage users’ basic rights, intellectual property rights, personal privacy and information data rights, and may even produce social prejudice.

Processing problems: In addition to data, the algorithm model used in training also affects the output of artificial intelligence. If training an artificial intelligence system is compared to cooking a dish, the training data act as the "ingredients" that determine the quality of the final dish, while the algorithm model acts as the "recipe"; both are indispensable. If the selected algorithm model is flawed or inconsistent with the intended purpose, then no matter how much high-quality data is fed in, a well-performing artificial intelligence system cannot be obtained. The discrimination and prejudice caused by machine learning algorithms and training data are collectively called pre-existing algorithmic bias, as opposed to the emergent algorithmic bias caused by the appearance of new knowledge, new business formats and new scenarios. Technological change has not eliminated the problem of false content generation; it has only packaged and concealed it. Generative artificial intelligence is therefore often subject to emergent algorithmic bias, which further increases the risks of use and the difficulty of governance and calls for more precise safeguards.

Output quality problems: Risk essentially stems from the fact that people’s ability to understand and control things is insufficient, so that problems cannot be resolved in time, before they take root or even erupt. From this point of view, the risk of a technology is inversely related to its controllability: the harder it is to control, the higher the risk. Large language models and chain-of-thought reasoning not only endow generative artificial intelligence with the ability of logical deduction but also make its output increasingly difficult to predict. In other words, generative artificial intelligence has low controllability and high potential risk. For example, because of social and cultural differences, the output of generative artificial intelligence may be appropriate in one cultural context but offensive in another. Humans can recognize such differences, but generative artificial intelligence, lacking built-in cultural awareness, may be unable to distinguish subtle cultural differences and may inadvertently produce inappropriate content.

Risk of improper use: Generative artificial intelligence is highly intelligent, yet the difficulty and cost of using it are low, which gives some people room to exploit its power for illegal activities; the risk of improper use arises from this. The Degree Law of the People’s Republic of China (Draft), drafted by the Ministry of Education of China, explicitly addresses conduct such as using artificial intelligence to write dissertations and how such conduct is to be handled. OpenAI has said that it is training ChatGPT on sensitive words, and that when a user’s question clearly violates the ethical and legal rules built into the model, ChatGPT will refuse to answer. Even so, some people can still bypass ChatGPT’s preset "firewall" and instruct it to generate illegal content or carry out illegal operations, so the risk of improper use has not been effectively curbed. In the long run, generative artificial intelligence may lead to a crisis of social trust in which people find it difficult to tell true from false, eventually leading to the "end of truth" and bringing human society into the "post-truth era". ⑩

Legal supervision risk: The internal input quality, processing and output quality problems of generative artificial intelligence, together with the external risk of improper use, push the difficulty of supervising it to a new peak and create the legal risk of regulatory failure. The legal risk of generative artificial intelligence is not limited to one specific field but spans multiple fields and requires collaborative governance by multiple departments. The terms of use of generative artificial intelligence often lack authorization for the handling of user interaction data, which may lead to personal privacy and national security problems: some data held by large enterprises already has a public character, and once it leaks it will not only harm the interests of the enterprise but also pose a threat to national security. In addition, the insufficient transparency of the decision-making process of generative artificial intelligence also affects legal supervision and may even become a decisive reason for regulators to prohibit its deployment in specific fields.

Solution: seek multi-dimensional balance

Faced with both the great application value of generative artificial intelligence and its internal and external risks, we need to make a reasonable choice. At present, although all countries have doubts about the safety of generative artificial intelligence, they unanimously recognize its potential in international competition, economic development, digital government and other areas. By formulating relevant policies and regulations, they try to manage the relationships between safety and development and between technological innovation and the public interest, providing the premise and guarantee for the use of generative artificial intelligence. China is no exception. According to Article 1 of the Interim Measures, their purpose is "to promote the healthy development and standardized application of generative artificial intelligence, safeguard national security and social public interests, and protect the legitimate rights and interests of citizens, legal persons and other organizations". Article 3 clearly states the general attitude of legislators towards the governance of generative artificial intelligence, namely to "adhere to the principle of giving equal weight to development and security and of combining the promotion of innovation with governance according to law". Through this principle we can find a solution suited to China’s national conditions: seeking a multi-dimensional balance in the governance of generative artificial intelligence.

First, the relationship between security and development should be balanced. Under the overall national security concept, security is the premise of development, development is the guarantee of security, and failure to develop is the greatest insecurity. China must actively develop modern technologies such as generative artificial intelligence to promote economic development and social progress and to keep strengthening its international competitiveness. Article 3 of the Interim Measures states that "the generative artificial intelligence service shall be subject to inclusive, prudent and classified supervision". The Interim Measures also set out specific measures. Articles 5 and 6 specify the direction and content of encouraging development, such as supporting industry organizations, enterprises, educational and scientific research institutions, public cultural institutions and relevant professional institutions in cooperating on technological innovation, data resource construction, transformation and application, and risk prevention for generative artificial intelligence. Articles 7 and 8 set safety requirements for training data processing activities, such as pre-training and optimization training, and for data labeling, respectively. For example, providers of generative artificial intelligence services should use data and foundation models from lawful sources and must not infringe the intellectual property rights that others enjoy under the law.

Second, the relationship between technological innovation and technological governance should be balanced. While taking measures to accelerate the innovative development of generative artificial intelligence, we should also recognize the internal and external risks it brings and respond accordingly. In short, we should govern while innovating and innovate while governing, so that technological innovation stays on the track of the rule of law. To this end, on the one hand, we should let policy play its leading role in economic and social development: actively encourage enterprises to innovate by simplifying administrative licensing and reducing taxes, guide enterprises to promote economic and social development through their own growth, and confirm their legitimate rights and interests through legislation. On the other hand, we should hold to the bottom line of governance according to law and promptly regulate and deal with possible violations of laws and regulations by enterprises. Specifically, under Article 19 of the Interim Measures, the relevant competent departments shall supervise and inspect generative artificial intelligence services according to their duties, and providers shall cooperate according to law. Providers of generative artificial intelligence that violate the Cybersecurity Law of the People’s Republic of China, the Data Security Law of the People’s Republic of China or the Personal Information Protection Law of the People’s Republic of China shall be fined, ordered to stop providing services, and investigated for criminal responsibility. Where existing laws and regulations cannot effectively regulate new forms of technological development, the competent department can first issue a warning, circulate a notice of criticism and order corrections within a time limit, and then legislate in a timely manner (taking into account the dialectical relationship between special and general legislation and between national and local legislation) so that there are laws to follow and administration is conducted according to law.

Finally, the relationship between corporate compliance obligations and what enterprises can bear should be balanced. Effective governance of generative artificial intelligence requires enterprises that train models and provide generative services to take on corresponding compliance obligations, but those obligations must be appropriate and should not exceed what enterprises can bear. Normative support for this can be found in the Interim Measures. First, Article 3 requires classified and hierarchical supervision of generative artificial intelligence services, which implies that governance should follow specific risks and that different compliance obligations should apply to enterprises providing different generative artificial intelligence services in different fields; this closely resembles the risk-based tiered supervision of the EU’s Artificial Intelligence Act. Second, compared with the original draft for comments, Articles 7 and 8 significantly reduce the compliance requirements for enterprises developing and training generative artificial intelligence. For example, Item (4) of Article 7 now reads "Take effective measures to improve the quality of training data and enhance the authenticity, accuracy, objectivity and diversity of training data", and Article 8 now reads "Sampling and verifying the marked contents". Third, Articles 9 and 14 give providers more flexible compliance space by redistributing the rights and obligations of service providers and users, so as to increase their willingness to comply. For example, "content producer responsibility" was changed to "network information content producer responsibility", so that enterprises providing services do not have to bear responsibility when users maliciously abuse generative artificial intelligence to generate illegal content.

Generative artificial intelligence technology has made great waves in many industries, such as commerce and news communication. How to supervise it effectively and make it serve human society is a practical question that all countries will need to consider and work on for a long time. The artificial intelligence supervision model each country chooses reflects its social values and national priorities, and these differing requirements may conflict, for example between protecting users’ privacy and promoting technological innovation, creating a more complicated regulatory environment. Although the EU’s "safety first, giving consideration to fairness" and the US’s "emphasizing self-regulation and supporting technological innovation" differ in many ways, they also share common ground, which can to some extent promote the technological innovation, safe use and legal governance of generative artificial intelligence. At the same time, transparency and interpretability will be key to complying with emerging regulations and building trust in generative artificial intelligence technology. The legislative trends in Europe and the United States also remind China to legislate on artificial intelligence at the national level as soon as possible; to determine the basic principles of artificial intelligence governance, the risk management system, the allocation of responsibilities among the parties, legal liability and so on; to coordinate the national governance layout; and to give full play to local initiative by piloting through local legislation, so as to avoid a lack of applicable law and accumulate experience for national artificial intelligence legislation.

(The author is a professor and doctoral supervisor at Guanghua Law School of Zhejiang University)

[Note: This article is the phased achievement of the major project of the National Social Science Fund "Research on Establishing and Perfecting China’s Network Comprehensive Governance System" (project number: 20ZDA062)]

[Notes]

① Ouyang L, Wu J, Jiang X, et al. "Training language models to follow instructions with human feedback", Advances in Neural Information Processing Systems, 2022(35), pp. 27730-27744.

② Natali Helberger, Nicholas Diakopoulos. "ChatGPT and the AI Act", Internet Policy Review, 2023, 12(1).

③ Zeng Xiong, Liang Zheng and Zhang Hui: "Regulation Path of Artificial Intelligence in EU and Its Enlightenment to China: Taking the Artificial Intelligence Act as the Analysis Object", E-government, No.9, 2022.

④ The White House, "Blueprint for an AI Bill of Rights", October 2022.

⑤ Friedler, Sorelle, Suresh Venkatasubramanian, Alex Engler, "How California and other states are tackling AI legislation", Brookings, March 2023.

⑥ Müge Fazlioglu. "US federal AI governance: Laws, policies and strategies", International Association of Privacy Professionals, June 2023.

⑦ Cheng Le: "Research on the Development Trend of Artificial Intelligence and Guiding Ideas of Standardization", National Governance, No.6, 2023.

⑧ Cheng Le: "The Legal Regulation of Generative Artificial Intelligence: From the Perspective of ChatGPT", On Politics and Law, No.4, 2023.

⑨ Liu Yanhong: "Three Security Risks and Legal Regulation of Generative Artificial Intelligence: Taking ChatGPT as an Example", Oriental Law, No.4, 2023.

⑩ Zhang Guangsheng: "National Security Risks and Countermeasures of Generative Artificial Intelligence", People’s Forum Academic Frontier, No.7, 2023.

Yoon-hyun Chang, director of Coffee, talks Kim So-yeon through a scene.

Kim So-yeon studio photo

Kim So-yeon hides her face and giggles.

Movie network news (compiled by Ben): According to South Korean media reports, on March 29th the Korean period spy blockbuster "Coffee", which was filming on location in Jinshandong, Paju City, Gyeonggi Province, opened its set to the Korean media, lifting the veil of mystery around the film. Reporters also spoke with the director, Yoon-hyun Chang, and the lead actors, Kim So-yeon and Joo Jin Mo.

In the interview, Director Yoon-hyun Chang spoke about the start of filming and about his calm attitude toward Li Duohai’s departure from the film.

Yoon-hyun Chang, returning to the Korean film scene after three years, was undoubtedly the focus of the reporters on set. When a reporter asked about the departure of Li Duohai, the original female lead of Coffee, and the twists and turns that almost kept the film from starting on schedule, Director Chang unexpectedly said that he was "calm". But he also revealed: "At first, I agreed with all the actors that we would work hard together, and then Li Duohai left. I really feel very sorry. It seems we were not fated to work together."

Director Yoon-hyun Chang also spoke about choosing Kim So-yeon to replace Li Duohai as the heroine. He said he was pleased to have found such a suitable actor for the role. "At first, I was worried that Kim So-yeon, who hasn’t made a film for a long time, would act like an inexperienced newcomer, but it turns out that she has an outstanding understanding of the work and is in perfect form; she is an actor who is always prepared." Yoon-hyun Chang also revealed that Kim So-yeon was very nervous at first because this was her first historical film. Judging from her recent work on set, however, her outstanding performance has left him full of expectations.

Although Kim So-yeon made her debut as early as 1994, her only films were Campus Transformation in 1997 and two feature-length films in 2005. Her brand-new image in her first Korean costume film, after six years away from the big screen, has attracted great attention from fans.

Yoon-hyun Chang also praised Joo Jin Mo, Hee-soon Park and Liu Shan: "The four main characters in the film are not only intertwined by fate but are also key figures driving the plot. The leading actors are determined to perform well and have prepared thoroughly. Everyone can look forward to their image and performance on the big screen."

The film’s producer also told the media that about 30% of Coffee is fictional, but that the general plot follows real historical events. For example, the role played by actress Liu Shan is based on Pei Zhenzi, a notorious Korean traitor in history. The producer also refuted reports that "the film investment has shrunk on a large scale" and that "shooting cannot be carried out", calling them completely groundless.

Regarding the news that Liu Shan, who plays "Zhen Zi" in the film, is about to get married, the producer said the team was shocked when they learned of it three weeks earlier. He added that filming of Coffee is expected to take three months, so Liu Shan, who is to marry in May, should be very busy at that time.

The film "Coffee" is adapted from the novel "Russian Coffee" by Korean novelist Kim Joo-hwan. It tells the story of Emperor Gojong, the last king of Korea, who took refuge in the Russian Embassy to escape Japanese aggression. The film also reveals the plot behind this incident, in which Russia and the Japanese Empire sent spies into the court to assassinate Emperor Gojong in order to manipulate the situation in Korea.

"Myopia" has become an "epidemic disease" affecting teenagers in China. How to prevent and control juvenile myopia? On February 22nd, at the 19th (Shanghai) International Glasses Exhibition, the relevant person in charge of the international optical brand "ZEISS" shared Zeiss’s knowledge and practice in the field of prevention and control of juvenile myopia.

According to the latest research report of the World Health Organization, the number of myopia patients in China is as high as 600 million, which is almost half of the total population of China. Among them, the myopia rate of primary school students has reached 45.7%, and the myopia rate of adolescents ranks first in the world.

In August 2018, the Ministry of Education and seven other departments issued the "Implementation Plan for Comprehensive Prevention and Control of Myopia among Children and Adolescents", which clearly stated that from this year, prevention and control of myopia will be included in the performance appraisal of local governments, making the prevention and control of myopia among children and adolescents a national strategy.

Most childhood myopia is axial myopia, caused by elongation of the eye’s axis. Compared with standard single-vision lenses, Zeiss Changle lenses can help to restrain axial elongation in children and slow the progression of myopia.